What is API Scraping?

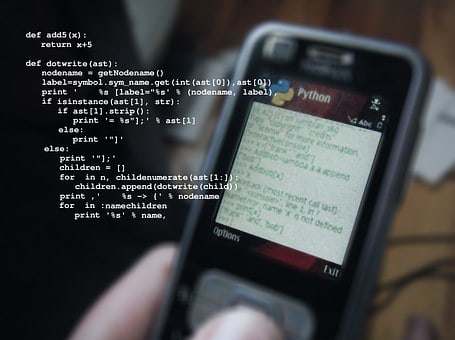

API scraping is a technique for extracting data from APIs (Application Programming Interfaces), and it is widely used across industries. In this tutorial, we will learn how to perform API scraping with Python, a popular programming language known for its simplicity and versatility.

Prerequisites:

Before we begin, make sure you have Python installed on your machine. You can download Python from the official website and install it following the instructions provided.

Step 1: Installing Required Libraries

To perform API scraping in Python, we need the requests library, which sends HTTP requests to the API and retrieves the data. You can install it with pip, the package manager for Python, by running `pip install requests` in your terminal or command prompt.

Step 2: Understanding the API

Before scraping data from an API, it’s essential to understand its documentation. The documentation will provide information about the API’s endpoints, parameters, and how to authenticate requests (if required).
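As a rough sketch of how the documentation maps onto code, the snippet below assembles a request from a base URL, an endpoint path, a query parameter, and an authentication header, then inspects it without sending it. The URL, the `limit` parameter, the token, and the bearer-token scheme are all placeholders assumed for illustration; the real values come from the documentation of the API you are using.

```python
import requests

# All values below are placeholders; take the real ones from the API's documentation.
BASE_URL = "https://api.example.com/v1"  # hypothetical base URL
ENDPOINT = "/items"                      # hypothetical endpoint path
API_TOKEN = "YOUR_API_TOKEN"             # hypothetical credential

request = requests.Request(
    method="GET",
    url=BASE_URL + ENDPOINT,
    params={"limit": 5},  # query parameters described in the docs
    headers={"Authorization": f"Bearer {API_TOKEN}"},  # assumes bearer-token auth
)

# prepare() builds the final request without sending anything over the network.
prepared = request.prepare()
print(prepared.url)  # https://api.example.com/v1/items?limit=5
```

Preparing the request first is a convenient way to check that the URL and headers look the way the documentation says they should before any traffic reaches the API.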

Step 3: Sending a Request to the API

Once you understand the API, you can start sending requests to it using the requests library. A GET request to an API endpoint returns a response object that carries the status code, the headers, and the body of the reply.
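A minimal sketch of a GET request might look like the following. The endpoint `https://api.example.com/v1/items` and the helper name `fetch_items` are made up for illustration, so substitute the URL and parameters from your API's documentation.

```python
import requests

# Hypothetical endpoint used only for illustration; replace it with a real one.
API_URL = "https://api.example.com/v1/items"


def fetch_items(url=API_URL, params=None, timeout=10):
    """Send a GET request and return the requests.Response object."""
    # `params` is encoded into the query string, e.g. ?limit=5.
    # `timeout` stops the call from waiting indefinitely on a stalled server.
    response = requests.get(url, params=params, timeout=timeout)
    return response
```

Calling `fetch_items(params={"limit": 5})` returns the response, and `response.status_code` then tells you whether the request succeeded (200 indicates success).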

Step 4: Parsing the Response

After sending a request and receiving a response, you will need to parse the response to extract the data you need. Most APIs return data in JSON format, which can be parsed with Python’s built-in json module or with the response object’s json() method.
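The sketch below shows the parsing step on its own. The JSON text is a made-up sample standing in for a response body, and its `items`, `name`, and `total` fields are assumptions; a real API defines its own structure.

```python
import json

# Sample payload standing in for an API response body (made up for illustration).
raw_body = '{"items": [{"id": 1, "name": "alpha"}, {"id": 2, "name": "beta"}], "total": 2}'

# json.loads turns JSON text into Python dicts, lists, strings, and numbers.
data = json.loads(raw_body)

# Use .get() with a default so a missing "items" key yields an empty list.
names = [item["name"] for item in data.get("items", [])]
print(names)  # ['alpha', 'beta']
```

When the data comes from the requests library, `response.json()` performs the same decoding directly on the response body.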

Step 5: Handling Pagination (if necessary)

Some APIs paginate their responses, meaning they only return a subset of data at a time. If the API you’re working with uses pagination, you will need to handle it to retrieve all the data. This usually involves sending multiple requests with different pagination parameters.
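Pagination schemes differ between APIs (page numbers, offsets, cursors, or links to the next page). The sketch below assumes one of the simpler ones: a hypothetical API that accepts `page` and `per_page` query parameters and returns a JSON list for each page, with an empty list once the data runs out. The function name `fetch_all_pages` is also illustrative.

```python
import requests


def fetch_all_pages(url, session=None, per_page=100, max_pages=1000):
    """Collect records from a page-numbered endpoint until a page comes back empty.

    Assumes the API takes `page` and `per_page` query parameters and returns
    a JSON list per page; adapt this to the scheme your API documents.
    """
    if session is None:
        session = requests.Session()  # reuses the connection across requests
    records = []
    for page in range(1, max_pages + 1):  # max_pages guards against endless loops
        response = session.get(
            url, params={"page": page, "per_page": per_page}, timeout=10
        )
        response.raise_for_status()  # raise on 4xx/5xx instead of continuing
        batch = response.json()
        if not batch:  # an empty page means there is nothing left to fetch
            break
        records.extend(batch)
    return records
```

For cursor-based APIs the loop is the same in spirit, except that each request passes along the cursor value returned by the previous response instead of an incrementing page number.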

Step 6: Error Handling

When scraping data from APIs, it’s essential to handle errors gracefully. This includes checking the response status code for errors and handling them appropriately in your code.
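One possible shape for this is sketched below: a helper that returns the decoded JSON on success and `None` on failure, printing a short message for each kind of error. The helper name `get_json` and the choice to return `None` are illustrative; in a larger program you might prefer to log the error or re-raise it.

```python
import requests


def get_json(url, params=None, timeout=10):
    """Return the decoded JSON body, or None if the request or decoding fails."""
    try:
        response = requests.get(url, params=params, timeout=timeout)
        response.raise_for_status()  # raises HTTPError for 4xx/5xx status codes
    except requests.exceptions.HTTPError as err:
        print(f"HTTP error: {err}")
        return None
    except requests.exceptions.Timeout:
        print(f"Request timed out after {timeout} seconds")
        return None
    except requests.exceptions.RequestException as err:
        # Base class for the remaining requests errors, such as connection failures.
        print(f"Request failed: {err}")
        return None

    try:
        return response.json()
    except ValueError:
        # Raised when the body is not valid JSON.
        print("Response body was not valid JSON")
        return None
```

The more specific exceptions are listed before `RequestException` because Python uses the first matching `except` clause.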

Frequently Asked Questions:

How do you scrape data from an API using Python?

To scrape data from an API using Python, you can use the requests library to send HTTP requests to the API endpoint and receive data in JSON format. You can then parse the JSON response to extract the data you need.

What are the benefits of API scraping?

API scraping offers benefits such as access to valuable data for analysis, automation of repetitive tasks, and integration of data from multiple sources into your applications.

Which Python libraries are used for API scraping?

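Python libraries commonly used for API scraping include requests for sending HTTP requests, json for parsing responses, and pandas for data manipulation and analysis. beautifulsoup4 can also help when an endpoint returns HTML rather than JSON.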

What are some common challenges in API scraping?

Common challenges in API scraping include rate limiting, handling authentication, dealing with pagination, and ensuring data quality and integrity.

How can I ensure that my API scraping is ethical?

To ensure that your API scraping is ethical, you should comply with the API provider’s terms of service, respect data privacy laws, and avoid overloading the API with excessive requests.
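One simple way to avoid sending excessive requests is to enforce a minimum delay between calls. The `Throttle` class below is a hypothetical helper written for this article, not part of any library, and the right interval depends on the limits the API provider publishes.

```python
import time


class Throttle:
    """Block so that successive calls to wait() are at least `min_interval` seconds apart."""

    def __init__(self, min_interval):
        self.min_interval = min_interval
        self._last_call = None  # no previous call yet, so the first wait() returns at once

    def wait(self):
        now = time.monotonic()
        if self._last_call is not None:
            remaining = self.min_interval - (now - self._last_call)
            if remaining > 0:
                time.sleep(remaining)  # pause only for the time still owed
        self._last_call = time.monotonic()
```

Calling `throttle.wait()` immediately before each request keeps the request rate at or below one per `min_interval` seconds.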

What are some real-world applications of API scraping in Python?

Real-world applications of API scraping in Python include monitoring social media trends, collecting financial data for analysis, aggregating product information for e-commerce websites, and gathering weather data for research and forecasting.