15 Best Web Scraping Tools for Extracting Online Data

Since harvesting data manually is time-consuming and painstaking, a wide range of automated tools has been developed to make the process fast and smooth. To help you choose the right one, we reviewed the best web scraping tools based on these four factors:

- Features: We scrutinized the distinguishing features of each of the web data extractors.

- Deployment method: We evaluated how each of the tools can be deployed: as a browser extension, in the cloud, on the desktop, or in some other way.

- Output format: We looked at the format each of the tools uses to deliver the scraped content.

- Price: We assessed the cost of using each of the tools.

Ultimately, we created the following list of the 15 best web scraping tools for extracting online data:

- Zenscrape

- Scrapy

- Beautiful Soup

- ScrapeSimple

- Web Scraper

- ParseHub

- Diffbot

- Puppeteer

- Apify

- Data Miner

- Import.io

- Parsers.me

- Dexi.io

- ScrapeHero

- Scrapinghub

Let’s get started with the list of best web scraping tools:

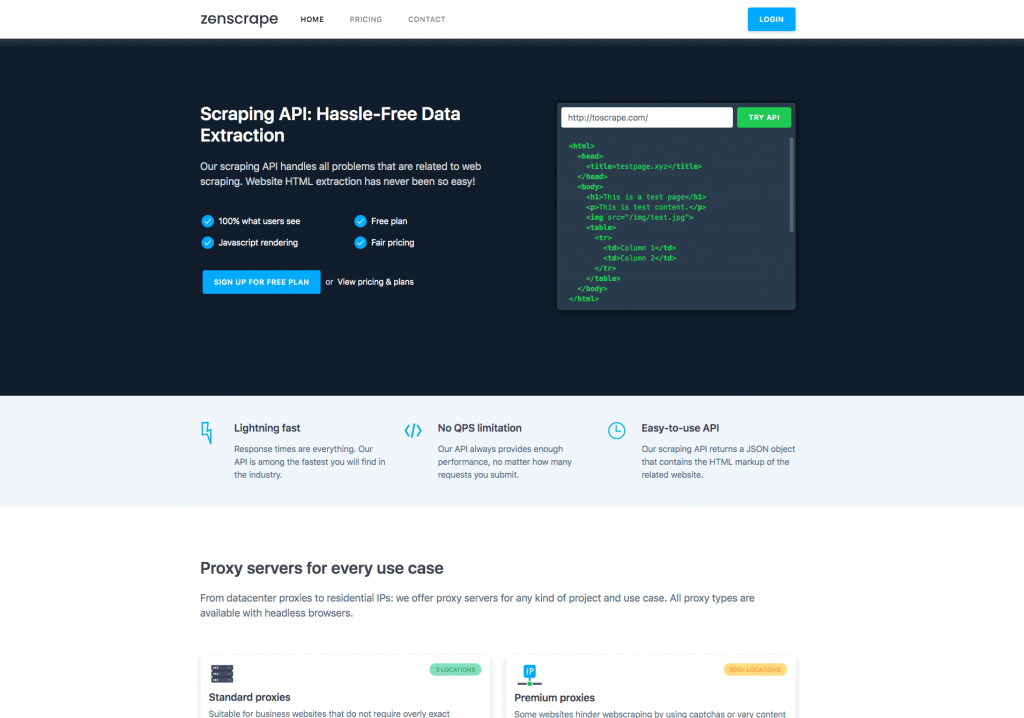

1. Zenscrape (zenscrape.com)

Zenscrape is a hassle-free API that offers lightning-fast and easy-to-use capabilities for extracting large amounts of data from online resources.

Features: It offers excellent features to make web scraping quick and reliable. To provide users with a painless experience, Zenscrape has different proxy servers for each use case. For example, if a website prevents web scraping, you can use its premium proxies, which are available in more than 300 locations, to sidestep the restriction.

Furthermore, it has a vast pool of more than 30 million IP addresses, which you can use to rotate IP addresses and avoid getting blocked. Zenscrape also extracts data from websites built with any modern JavaScript framework, such as React, Angular, or Vue. With Zenscrape, you won’t need to worry about queries-per-second (QPS) limits.
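To make the idea of IP rotation concrete, here is a minimal sketch of round-robin proxy rotation using only Python's standard library. It is not Zenscrape's implementation or API: the proxy addresses are placeholder documentation addresses, and `assign_proxies` and `opener_for` are hypothetical helper names chosen for illustration.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints (reserved documentation addresses);
# replace them with proxies you are actually allowed to use.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def assign_proxies(urls, proxies):
    """Pair each URL with the next proxy in a round-robin rotation."""
    pool = itertools.cycle(proxies)
    return [(url, next(pool)) for url in urls]


def opener_for(proxy):
    """Build a urllib opener that routes HTTP and HTTPS traffic via `proxy`."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return urllib.request.build_opener(handler)
```

In a real scraper you would loop over `assign_proxies(urls, PROXIES)` and call `opener_for(proxy).open(url, timeout=10)` for each pair, so that consecutive requests leave from different addresses.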

Deployment method: The Zenscrape scraping API executes requests in modern headless Chrome browsers. This way, pages are rendered with JavaScript in the same way a real browser would render them, ensuring you retrieve what everyday users see.

Output format: It returns a JSON object that has the HTML markup of the scraped content.

Price: Zenscrape offers different pricing plans to suit every use case. There is a free plan that allows you to make 1,000 requests per month. The paid plans range from $8.99 per month to $199.99 per month. Due to its generous free plan, it is also among the best free web scraping tools.

2. Scrapy (scrapy.org)

Scrapy is an open-source Python-based framework that offers a fast and efficient way of extracting data from websites and online services.

Features: The Scrapy framework is used to create web crawlers and scrapers for harvesting data from websites. With Scrapy, you can build highly extensible and flexible applications for performing a wide range of tasks, including data mining, data processing, and historical archival.

Getting up and running with Scrapy is easy, mainly because of its extensive documentation and supportive community that can assist you in solving any development challenges.

Furthermore, there are several middleware modules and tools that have been created to help you in making the most of Scrapy. For example, you can use Scrapy Cloud to run your crawlers in the cloud, making it one of the best free web scraping tools.
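For readers new to crawling, the core loop that a framework like Scrapy automates can be sketched in a few lines. The example below is not Scrapy code: it uses only Python's standard library, and `FAKE_SITE`, `LinkCollector`, and `crawl` are hypothetical names. The "website" is an in-memory dictionary, so the sketch runs without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "website": URL -> HTML body.
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/">Home</a>',
    "https://example.com/b": '<a href="/c">C</a>',
    "https://example.com/c": "<p>No links here</p>",
}


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl; returns URLs in the order they were visited."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)  # `fetch` returns the page HTML, or None on failure
        if html is None:
            continue
        visited.append(url)
        parser = LinkCollector()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


order = crawl("https://example.com/", FAKE_SITE.get)
```

Swapping `FAKE_SITE.get` for a function that downloads pages would turn this into a very basic live crawler. A full framework adds the parts omitted here, such as request scheduling, retries, and data export.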

Deployment method: It can be installed to run on multiple platforms, including Windows, Linux, BSD, and Mac.

Output format: Data can be exported in XML, CSV, or JSON formats.

Price: Scrapy is available for free.

3. Beautiful Soup (https://www.crummy.com/software/BeautifulSoup/)

Beautiful Soup is an open-source Python-based library designed to make pulling data from web pages easy and fast.

Features: Beautiful Soup is useful in parsing and scraping data from HTML and XML documents. It comes with elaborate Pythonic idioms for altering, searching, and navigating a parse tree. It automatically transforms the incoming documents and outgoing documents to Unicode and UTF-8 character encodings, respectively.

With just a few lines of code, you can set up your web scraping project using Beautiful Soup and start gathering valuable data. Furthermore, there is a healthy community to assist you in overcoming any implementation challenges. That’s what makes it one of the best web scraping tools.

Deployment method: It can be installed to run on multiple platforms, including Windows, Linux, BSD, and Mac.

Output format: It returns scraped data in HTML and XML formats.

Price: It’s available for free.

4. ScrapeSimple (scrapesimple.com)

ScrapeSimple provides a service that creates and maintains web scrapers according to the customers’ instructions.

Features: ScrapeSimple allows you to harvest information from any website, without any programming skills. After telling them what you need, they’ll create a customized web scraper that gathers information on your behalf. If you want a simple way of scraping online data, then this service could best meet your needs.

Deployment method: It periodically emails you the scraped data.

Output format: Data is delivered in CSV format.

Price: Price depends on the size of each project.

5. Web Scraper (webscraper.io)

Web Scraper is a simple and efficient tool that takes the pain out of web data extraction.

Features: Web Scraper allows you to retrieve data from dynamic websites; it can navigate a site with multiple levels of navigation and extract its content. It supports full JavaScript execution, waiting for Ajax requests to complete, and page scroll-down to optimize data extraction from modern websites.

Furthermore, Web Scraper has a modular selector system that allows you to create sitemaps from various types of selectors and customize data scraping depending on the structure of each site. You can also use the tool to schedule scraping, rotate IP addresses to avoid being blocked, and execute scrapers via an API.

Deployment method: Web Scraper can be deployed as a browser extension or in the cloud.

Output format: Scraped data is returned in the CSV format. You can export it to Dropbox.

Price: The browser extension is provided for free. The paid plans, which come with added features, are priced from $50 per month to more than $300 per month.

6. ParseHub (parsehub.com)

ParseHub is a powerful tool that allows you to harvest data from any dynamic website, without the need to write any web scraping scripts.

Features: ParseHub provides an easy-to-use graphical interface for collecting data from interactive websites. After specifying the target website and clicking the places you need data to be scraped from, ParseHub’s machine-learning technology takes over with the magic, and pulls out the data in seconds.

Since it supports JavaScript, redirects, AJAX requests, sessions, cookies, and other technologies, ParseHub can be used to scrape data from any type of website, even the most outdated ones. Furthermore, it supports automatic IP rotation, scheduled data collection, data retention for up to 30 days, and regular expressions.

Deployment method: Apart from the web application, it can also be deployed as a desktop application for Windows, Mac, and Linux operating systems.

Output format: Scraped data can be accessed through JSON, Google Sheets, CSV/Excel, Tableau, or API. You can also save images and files to S3 or Dropbox.

Price: You can use ParseHub for free, but you’ll only access a limited number of features. To access more features, you’ll need to go for one of its paid plans, which range from $149 per month to more than $499 per month.

7. Diffbot (diffbot.com)

Diffbot differs from most other web scrapers because it uses computer vision and machine learning technologies (instead of HTML parsing) to harvest data from web pages.

Features: Diffbot uses innovative computer vision technology to visually parse web pages for relevant elements and then outputs them in a structured format. This way, it becomes easier to collect the essential information and discount the elements not valuable to the primary content.

Notably, the Knowledge Graph feature allows you to dig into an extensive interlinked database of various content and retrieve clean, structured data. It also offers dynamic IPs and data storage for up to 30 days.

Deployment method: Diffbot offers a wide range of automatic APIs for extracting data from web articles, discussion forums, and more. For example, you can deploy the Crawlbot API to retrieve data from entire websites.

Output format: It returns the scooped data in various formats, including HTML, JSON, and CSV.

Price: Diffbot offers a 14-day free trial period for testing its products. Thereafter, you can go for any of its paid plans, which start from $299 per month to $3,999 per month.

8. Puppeteer (pptr.dev)

Puppeteer is a Node-based headless browser automation tool often used to retrieve data from websites that require JavaScript for displaying content.

Features: Puppeteer comes with full capabilities for accessing the Chromium or Chrome browser. Consequently, most manual browser tasks can be completed using Puppeteer. For example, you can use the tool to crawl web pages and create pre-rendered content, create PDFs, take screenshots, and automate various tasks. It is backed by Google’s Chrome team and it has an impressive open source community; therefore, you can get quick support in case you have any implementation issues.

Deployment method: It offers a high-level API for controlling the Chromium or Chrome browser. Although Puppeteer runs headless by default, it can be configured to run a full (non-headless) browser.

Output format: It returns extracted data in various formats, including JSON and HTML.

Price: It’s available for free.

9. Apify (apify.com)

Apify is a scalable solution for performing web scraping and automation tasks.

Features: Apify allows you to crawl websites and scoop content using the provided JavaScript code. With the tool, you can extract HTML pages and convert them to PDF, extract Google’s search engine results pages (SERPs), scan web pages and send notifications whenever something changes, extract location information from Google Places, and automate workflows such as filling web forms. It also provides support for Puppeteer.

Deployment method: Apify can be deployed using the Chrome browser, as a headless Chrome in the cloud, or as an API.

Output format: It returns harvested data in various formats, including Excel, CSV, JSON, and PDF.

Price: There is a free 30-day trial that allows you to test the features of the tool before committing to a monthly plan. Paid plans range from $49 per month to more than $499 per month.

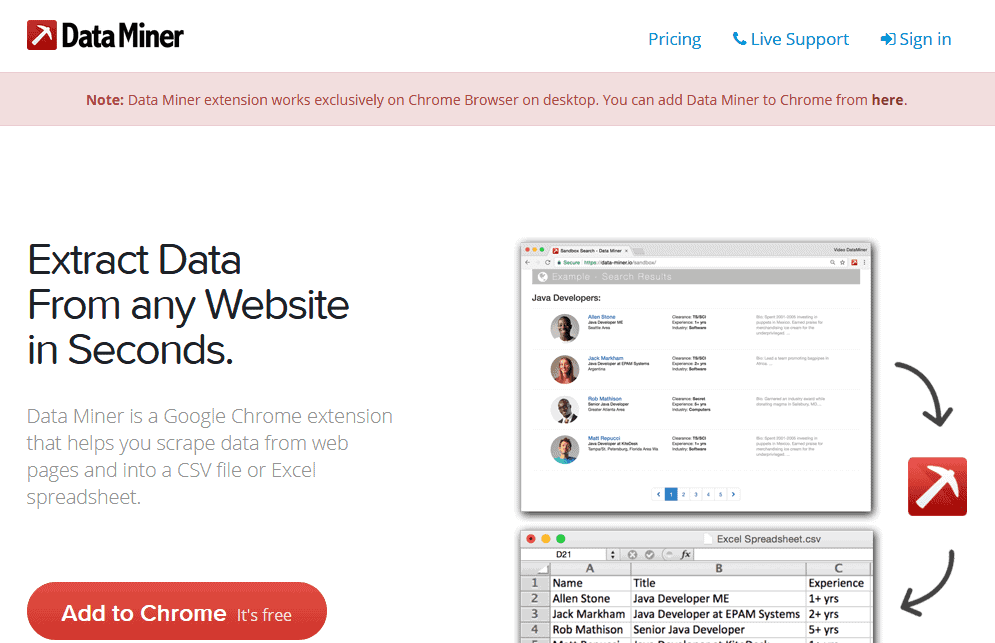

10. Data Miner (data-miner.io)

Data Miner is a simple tool for scraping data from websites in seconds.

Features: With Data Miner, you can extract data with one click (without writing a line of code), run custom extractions, perform bulk scraping based on a list of URLs, extract data from websites with multiple inner pages, and fill forms automatically. You can also use it for extracting tables and lists.

Deployment method: It’s available as a Chrome extension.

Output format: Data Miner exports scraped content into CSV, TSV, XLS, and XLSX files.
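To show what table extraction with CSV export involves under the hood, here is a minimal sketch that uses only Python's standard library. It is not how Data Miner itself works; `TableExtractor`, `table_to_csv`, and the sample HTML are hypothetical and chosen purely for illustration.

```python
import csv
import io
from html.parser import HTMLParser


class TableExtractor(HTMLParser):
    """Collect the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def table_to_csv(html):
    """Parse the HTML and return the table rows as CSV text."""
    parser = TableExtractor()
    parser.feed(html)
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(parser.rows)
    return buffer.getvalue()


SAMPLE = """
<table>
  <tr><th>Tool</th><th>Price</th></tr>
  <tr><td>Scrapy</td><td>Free</td></tr>
  <tr><td>Beautiful Soup</td><td>Free</td></tr>
</table>
"""
csv_text = table_to_csv(SAMPLE)
```

The sketch assumes one simple, non-nested table per page. Real-world pages with nested tables or merged cells need more careful handling, which is part of what dedicated tools take care of.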

Price: You can use the tool for free, but you’ll be limited to 500 pages per month. To get higher scrape limits and more functionality, you’ll need to go for one of the paid plans, which range from $19.99 per month to $200 per month.

11. Import.io (import.io)

Import.io eliminates the intricacies of working with web data by allowing you to harvest and structure data from websites easily.

Features: With Import.io, you can leverage web data and make well-informed decisions. It provides a user-friendly interface that allows you to retrieve data from web pages and organize them into datasets. After pointing and clicking at the target content, Import.io uses sophisticated machine learning techniques that learn to harvest them into your dataset. Furthermore, it delivers charts and dashboards to enhance the visualization of the scraped data as well as custom reporting tools to ensure you make the most of the data. You can also use the tool to get website screenshots and obey the stipulations in the robots.txt file.
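Obeying robots.txt is something you can also do in your own scripts. The sketch below uses Python's standard `urllib.robotparser` module to check whether a URL may be fetched. The robots.txt content, the `example-bot` user agent, and the `build_checker` helper are hypothetical; this illustrates the general technique and is not a description of how Import.io implements it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. A real scraper would download it from
# the target site's /robots.txt before crawling.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def build_checker(robots_txt, user_agent):
    """Return a function that tells whether `user_agent` may fetch a URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return lambda url: parser.can_fetch(user_agent, url)


allowed = build_checker(ROBOTS_TXT, "example-bot")
```

With these rules, `allowed("https://example.com/products")` is true, while `allowed("https://example.com/private/data")` is false, so a polite scraper would skip the second URL.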

Deployment method: Import.io can be deployed in the cloud or as an API.

Output format: It delivers retrieved data in various formats, including CSV, JPEG, and XLS.

Price: There is a free version that comes with basic features for extracting web data. If you need advanced features, you’ll need to contact them for specific pricing.

12. Parsers.me (parsers.me)

Parsers.me is a versatile web scraping tool that allows you to extract unstructured data with ease.

Features: Parsers.me is designed to extract JavaScript, directories, single data, tables, images, URLs, and other web resources. After you select the information to be scraped from the target site, the tool automatically completes the process for you. It uses machine learning techniques to find similar pages on the website and retrieve the required information, without the need to specify elaborate settings. Furthermore, the tool lets you generate charts with analyzed data, schedule the start of scraping, and view scraping history.

Deployment method: It is deployed as a Chrome browser extension.

Output format: It gives results in Excel, JSON, CSV, XML, XLS, or XLSX formats.

Price: You can use Parsers.me for free, but you’ll be limited to 1,000 page scrape credits every month. Beyond the free subscription plan, you can go for one of its paid plans, which range from $19.99 per month to $199 per month.

13. Dexi.io (dexi.io)

Dexi.io is an intelligent, automated web extraction software that applies sophisticated robot technology to provide users with fast and efficient results.

Features: Dexi.io offers a point-and-click UI for automating the extraction of web pages. The Dexi.io platform has three main types of robots: Extractors, Crawlers, and Pipes. Extractors are the most advanced robots and are used for performing a wide range of tasks, Crawlers are used for gathering a large number of URLs and other basic information from sites, and Pipes are used for automating data processing tasks. Furthermore, Dexi.io provides several other functionalities, including CAPTCHA solving, form filling, and anonymous scraping through proxy servers.

Deployment method: It’s deployed as a browser-based web application.

Output format: You can save the scooped content directly to various online storage services, or export it as a CSV or JSON file.

Price: Dexi.io offers a wide range of paid plans, which range from $119 per month to $699 per month.

14. ScrapeHero (scrapehero.com)

ScrapeHero is a fully managed enterprise-grade tool for web scraping and transforming unstructured data into useful data.

Features: ScrapeHero has a large worldwide infrastructure that makes extensive data extraction fast and trouble-free. With the tool, you can perform high-speed web crawling at 3,000 pages per second, schedule crawling tasks, and automate workflows. Furthermore, it handles complicated JavaScript/Ajax websites, solves CAPTCHAs, and sidesteps IP blacklisting.

Deployment method: It’s deployed as a browser-based web application.

Output format: Extracted data is delivered in various formats, including XML, Excel, CSV, JSON, as custom APIs, and more.

Price: ScrapeHero’s pricing starts from $50 per month per website. There is also an enterprise plan, which is priced at $1,000 per month. You can also opt for the on-demand plan, which starts at $300 per website.

15. Scrapinghub (scrapinghub.com)

Scrapinghub provides quick and reliable web scraping services for converting websites into actionable data.

Features: Scrapinghub has two categories of tools for extracting data: data services and developer tools. The data services products let you extract accurate data at any scale and from any website. The developer tools are suited for professional developers and data scientists looking to complete specialized scraping projects. There are four types of developer tools: Crawlera, Extraction API, Splash, and Scrapy Cloud. Furthermore, Scrapinghub is also involved in developing the previously mentioned Scrapy, a popular open-source web scraper.

Deployment method: Scrapinghub’s tools can be deployed in several ways: in the cloud, on the desktop, or in the browser.

Output format: They give results in various formats, including JSON, CSV, and XML.

Price: Scrapinghub’s products and services are priced differently. For example, Crawlera, which is designed for ban management and proxy rotation, is priced from $25 per month to more than $1,000 per month.

Wrapping up

That’s our massive list of 15 best web scraping tools for harvesting online content!

The web is the largest information storehouse that man has ever created. Using one good web scraper, you can take unstructured data from the Internet and turn it into a structured format that can easily be consumed by other applications, which greatly enhances business outcomes and enables informed decision making.