If you scrape the web, you already know that it comes with complexities, and resolving them can be a challenge. When you operate in a legal grey area, you are playing a cat-and-mouse game with the website’s owner. A web scraping API combined with a web scraping proxy can make the job easier.

However, some complexities are hard to resolve on your own. Don’t worry. We have written this blog to help you resolve them using advanced techniques and a web scraping API. By the end of this blog, you should know enough to handle most scraping projects with confidence. Let’s get started.

What Are the Complexities of Web Scraping?

Here are some complexities that you can face in most web scraping projects.

Asynchronous Loading and Client-Side Rendering

One complexity of the web scraping process is dealing with websites that use asynchronous loading and client-side rendering. This means that the website loads its content dynamically after the initial HTML document has loaded and that the content may not be visible in the source code of the page.

Authentication

Many websites also have authentication systems that may complicate your web scraping. Authentication can be as simple as storing a cookie or entering a username and password. However, we may need to take care of some subtleties in some cases.

For example:

- Adding fields other than username and password to the POST request.

- Setting headers such as Authorization and Referer.

Responses such as 401 (Unauthorized), 403 (Forbidden), or 407 (Proxy Authentication Required) indicate that the request lacks proper authentication.
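As a rough illustration of the points above, the sketch below builds a login POST that carries an extra hidden field and a Referer header. It uses only Python's standard library so it runs without extra installs. The URL, the `csrf_token` field name, and the helper names are hypothetical; a real site defines its own form fields.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical login endpoint, used for illustration only.
LOGIN_URL = "https://example.com/login"


def build_login_request(username, password, csrf_token):
    """Build (but do not send) a login POST with one extra hidden field."""
    form = {
        "username": username,
        "password": password,
        # Assumed field name: many login forms include a hidden token
        # that must be sent back along with the credentials.
        "csrf_token": csrf_token,
    }
    headers = {
        "Referer": LOGIN_URL,
        "Content-Type": "application/x-www-form-urlencoded",
    }
    body = urlencode(form).encode("utf-8")
    return Request(LOGIN_URL, data=body, headers=headers, method="POST")


def needs_authentication(status_code):
    """True for the status codes that point to missing or rejected credentials."""
    return status_code in (401, 403, 407)


request = build_login_request("alice", "s3cret", "abc123")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing the request to `urllib.request.urlopen` would send it. Checking the returned status with `needs_authentication` is one simple way to notice that a login step was missed.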

Server-Side Blacklisting

Some website owners also implement anti-scraping mechanisms on the server side. These mechanisms track browsing patterns and incoming traffic, so the unusual behavior of a web scraper can easily get caught. Let’s look at some common ways of server-side blacklisting.

Analyzing the Rate of Requests

Some websites also analyze the rate of requests. For example, a certain client X is making Y requests every second. This behavior can be a red flag for website owners. Moreover, it can also lead to temporary or permanent blocking of your IP addresses.

Header Inspection

Header inspection is another technique to detect non-human users. It compares the headers of incoming requests, such as User-Agent, with those sent by real browsers.

Honeypots

This method sets traps in the form of links that are not visible to human users. The simplest way to do this is to hide the link with the CSS rule display: none. A scraper that follows such a link reveals itself and can be blocked.
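One way a scraper might avoid the simplest honeypots is to skip links that are hidden inline. The sketch below is a minimal example using the standard library's `html.parser`. It only sees inline `style` and `hidden` attributes, so links hidden through an external stylesheet would not be caught. The class name and sample HTML are made up for illustration.

```python
from html.parser import HTMLParser


class VisibleLinkParser(HTMLParser):
    """Collect hrefs, separating anchors hidden with inline display:none."""

    def __init__(self):
        super().__init__()
        self.visible_links = []
        self.hidden_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        # Normalise the style so "display: none" and "display:none" both match.
        style = (attributes.get("style") or "").replace(" ", "").lower()
        if "display:none" in style or "hidden" in attributes:
            self.hidden_links.append(href)
        else:
            self.visible_links.append(href)


sample_html = """
<a href="/products">Products</a>
<a href="/trap" style="display: none">Do not follow</a>
<a href="/about">About</a>
"""

parser = VisibleLinkParser()
parser.feed(sample_html)
print(parser.visible_links)
print(parser.hidden_links)
```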

Pattern Detection

This method checks browsing patterns, such as the number of clicks a user makes. These anti-crawling mechanisms run on the server. Response codes such as 403, 429, and 503 can indicate that you have been blacklisted on the server side.
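A scraper can check for these status codes and slow down when it sees them. The sketch below is one possible approach, not a standard recipe: the exponential backoff schedule and its default values are assumptions chosen for illustration.

```python
# Status codes that the text associates with server-side blacklisting.
BLOCK_STATUS_CODES = {403, 429, 503}


def looks_blocked(status_code):
    """True when the response code suggests the server is refusing the scraper."""
    return status_code in BLOCK_STATUS_CODES


def backoff_delay(attempt, base_seconds=2.0, max_seconds=60.0):
    """Seconds to wait before retry number `attempt` (0-based), doubling each time."""
    return min(base_seconds * (2 ** attempt), max_seconds)


for attempt in range(4):
    print(attempt, backoff_delay(attempt))
```

In a real crawl loop, a blocked response would be followed by `time.sleep(backoff_delay(attempt))` before the next try.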

Redirects and Captchas

Some websites redirect users to a captcha page to check whether the browsing agent is a human or a robot. Services such as Cloudflare implement this kind of check, which can make it hard to reach the actual content.

Structural Complexities

Here are some structural complexities that you should know about.

Complicated Navigation

When scraping a website, complicated navigation can make it challenging to extract the desired information.

Unstructured HTML

Unstructured HTML can make it difficult to extract data from a website as the data may not be organized in a way that is easy to parse.

Iframe Tags

Iframe tags can be used to embed content from another website within a webpage. Because that content is loaded from a separate URL, it is not part of the parent page’s HTML.

How to Resolve the Complexities of Web Scraping With Python?

Here are the tricks you can use to resolve the complexities described above.

Choosing the Right Frameworks, Tools, and Libraries

There are several frameworks, tools, and libraries available that can simplify web scraping projects. Popular choices include BeautifulSoup, Scrapy, Selenium, and Requests.

BeautifulSoup and Requests pair well for less complicated projects. On the other hand, Scrapy is better suited to larger scraping projects.

You should also choose a reliable proxy provider like Zenscrape to get the correct web data. It can give you residential proxies for web scraping to mask your IP address. However, you can find many other providers of rotating residential proxies online.

Let’s explore some of the most popular tools for web scraping.

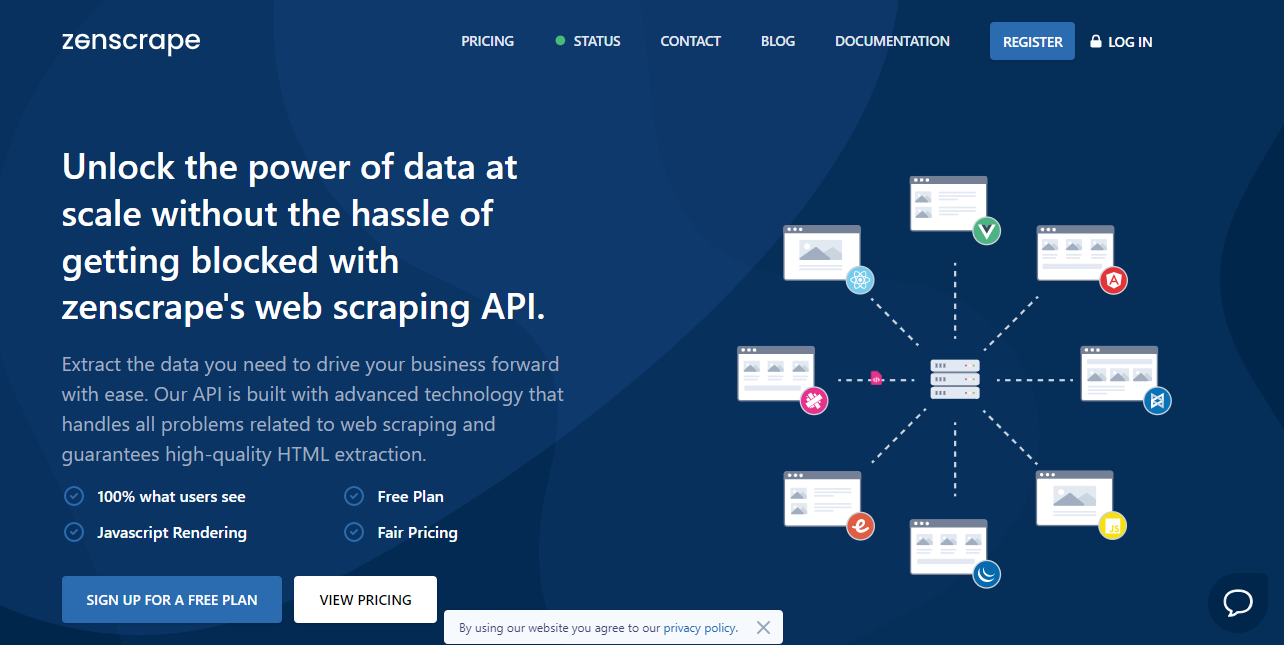

Zenscrape

Zenscrape is a powerful web scraping tool that enables users to extract data from websites quickly and efficiently. Whether you need to collect data for market research, competitor analysis, or any other purpose, Zenscrape provides a reliable solution.

With its user-friendly interface and robust features, Zenscrape simplifies the web scraping process, allowing users to focus on extracting valuable insights from the web.

One notable feature of Zenscrape is its ability to handle JavaScript rendering, making it suitable for scraping dynamic websites that rely on client-side rendering.

This means that even if a website heavily relies on JavaScript to load and display its content, Zenscrape can still scrape the data effectively.

The tool supports all frameworks, IP rotation, CAPTCHA handling, and high concurrency, which helps scraping tasks run smoothly and without disruptions.

Fivetran

Fivetran is a data integration platform that collects and centralizes data from various sources. It connects over 150 data sources, allowing businesses to consolidate data from different systems into a unified and structured format.

Fivetran’s automated data pipelines simplify the data integration process, ensuring businesses can access real-time and accurate data for their analytics and reporting needs.

Hevo Data

Hevo Data is a cloud-based data integration platform that enables organizations to collect, transform, and load data from various sources into a data warehouse or other destinations. It supports batch and real-time data ingestion, making it suitable for organizations with different data integration requirements.

Hevo Data offers pre-built connectors for popular data sources and the ability to build custom connectors, providing flexibility and scalability to handle complex data integration scenarios.

Dataddo

Dataddo is a data integration and analytics platform that automates data extraction, transformation, and loading processes. It allows users to connect to various data sources, transform the data using a visual interface, and load it into a chosen destination.

Dataddo’s platform supports batch and real-time data integration, and it offers features like data mapping, scheduling, and data quality checks to ensure the accuracy and reliability of the integrated data.

Bright Data

Bright Data, formerly known as Luminati Networks, is a leading provider of web data collection solutions. It offers a comprehensive suite of tools and services for web scraping, including a large proxy network, CAPTCHA handling, and data extraction capabilities.

Bright Data’s proxy network spans over 200 locations worldwide, enabling users to access geographically restricted data and overcome IP blocking. The company’s solutions cater to various use cases, from brand monitoring and market research to ad verification and price intelligence.

Zenscrape is one of the best proxy providers with the following use cases:

- Web Crawling

- Price Data Scraping

- Marketing Data Scraping

- Review scraping

- Hiring Data Scraping

- Real estate data scraping

Handling Authentication

You can use APIs or libraries that handle authentication, such as OAuth with the requests-oauthlib library. Simple authentication is easy to handle, but it is much less common these days.
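For the simple case, the credentials usually travel in an Authorization header. The sketch below assumes that "simple authentication" means HTTP Basic authentication, and it also shows the Bearer form commonly used once an OAuth flow has produced an access token. The function names are hypothetical, and a full OAuth flow is out of scope here.

```python
import base64


def basic_auth_header(username, password):
    """Return the Authorization header for HTTP Basic authentication."""
    raw = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(raw).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def bearer_auth_header(access_token):
    """Return the Authorization header for a token obtained elsewhere (e.g. OAuth)."""
    return {"Authorization": f"Bearer {access_token}"}


print(basic_auth_header("user", "pass"))
print(bearer_auth_header("example-token"))
```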

Handle Asynchronous Loading

Asynchronous loading can make it difficult to extract data from websites. Here is how you can deal with it.

Detecting Asynchronous Loading

You can detect it by viewing the page source (right-click on the web page) and checking whether content you see in the browser is missing from it. You can also inspect the page’s requests using the browser’s network tool.

Getting Around Asynchronous Loading

Here are some ways to get around asynchronous loading.

Using a Web Driver

Web drivers like Selenium can help automate web interactions and enable web scraping for websites that require user interaction.

Inspecting Ajax Calls

Inspecting Ajax calls can help identify the data sources used by a website and help in web scraping. In this case, we may need to set an X-Requested-With header that can help us mimic AJAX requests in our script.
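Once the network tool reveals the endpoint behind a page, a script can request it directly. The sketch below builds such a request with the X-Requested-With header and parses a JSON body. The endpoint URL and the `items`/`name` response shape are assumptions for illustration; a real site will have its own.

```python
import json
from urllib.request import Request

# Hypothetical endpoint discovered in the browser's network tool.
API_URL = "https://example.com/api/products?page=1"


def build_ajax_request(url, referer):
    """Build (but do not send) a request that looks like a browser AJAX call."""
    headers = {
        "X-Requested-With": "XMLHttpRequest",
        "Accept": "application/json",
        "Referer": referer,
    }
    return Request(url, headers=headers)


def parse_item_names(body):
    """Extract names from a JSON body shaped like {"items": [{"name": ...}]}."""
    payload = json.loads(body)
    return [item["name"] for item in payload.get("items", [])]


request = build_ajax_request(API_URL, "https://example.com/products")
# urllib normalises header capitalisation, so compare in lower case.
sent_headers = {name.lower(): value for name, value in request.header_items()}
print(sent_headers["x-requested-with"])

sample_body = '{"items": [{"name": "Widget"}, {"name": "Gadget"}]}'
print(parse_item_names(sample_body))
```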

Tackling Infinite Scrolling

Infinite scrolling can be tackled by using techniques like scrolling the page using JavaScript or analyzing the network traffic to identify the requests made by the website.
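When the network traffic shows that each scroll triggers a numbered page request, a scraper can call those pages in a loop instead of scrolling. The sketch below assumes a page-number scheme that ends with an empty page. `fetch_page` is a hypothetical callable standing in for the real HTTP call, and the fake data exists only to make the example runnable.

```python
def collect_all_items(fetch_page, max_pages=50):
    """Call fetch_page(1), fetch_page(2), ... until a page comes back empty."""
    items = []
    for page_number in range(1, max_pages + 1):
        batch = fetch_page(page_number)
        if not batch:
            break  # an empty page means there is nothing left to load
        items.extend(batch)
    return items


# Stand-in for a real request to the endpoint behind the infinite scroll.
FAKE_PAGES = {1: ["a", "b"], 2: ["c"], 3: []}


def fake_fetch_page(page_number):
    return FAKE_PAGES.get(page_number, [])


all_items = collect_all_items(fake_fetch_page)
print(all_items)
```

The `max_pages` cap is a safety net so that a site that never returns an empty page cannot keep the loop running forever.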

Find the Right Selectors

Choosing the right selectors is important to extract data accurately. You can use tools like Chrome DevTools or Firefox Developer Tools (which replaced Firebug) to inspect the website’s HTML structure, and then target the elements you need with CSS selectors.
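To show what a selector such as `span.price` does, the sketch below mimics it with the standard library's `html.parser`, so it runs without extra installs. This is a stand-in for illustration only: a library like BeautifulSoup offers real CSS selector support, and the class name and sample HTML here are made up.

```python
from html.parser import HTMLParser


class ClassTextExtractor(HTMLParser):
    """Collect the text of every <tag> element that carries a given class."""

    def __init__(self, tag, css_class):
        super().__init__()
        self.tag = tag
        self.css_class = css_class
        self.depth = 0  # greater than 0 while inside a matching element
        self.results = []

    def handle_starttag(self, tag, attrs):
        if self.depth:
            if tag == self.tag:
                self.depth += 1  # nested element with the same tag name
            return
        classes = (dict(attrs).get("class") or "").split()
        if tag == self.tag and self.css_class in classes:
            self.depth = 1
            self.results.append("")

    def handle_endtag(self, tag):
        if self.depth and tag == self.tag:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.results[-1] += data


def select_text(html, tag, css_class):
    """Rough equivalent of selecting `tag.css_class` and reading its text."""
    extractor = ClassTextExtractor(tag, css_class)
    extractor.feed(html)
    return [text.strip() for text in extractor.results]


sample_html = """
<div class="product"><span class="name">Widget</span><span class="price sale">$9.99</span></div>
<div class="product"><span class="name">Gadget</span><span class="price">$19.50</span></div>
"""

prices = select_text(sample_html, "span", "price")
print(prices)
```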

Tackling Server-Side Blacklisting

In the previous section, we discussed some methods of server-side blacklisting, which can block us from extracting data from a website. You can use any of the following ways to tackle it.

- Using Proxy Servers and IP Proxy Rotation

- User-Agent Spoofing and Rotation

- Reducing the Crawling Rate
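The three ideas in this list can be combined. The sketch below cycles through proxies and User-Agent strings and attaches a fixed delay to each request, using only the standard library. The proxy addresses, User-Agent strings, and delay value are placeholders, not real endpoints or recommended settings.

```python
import itertools
import urllib.request

# Placeholder values for illustration only.
PROXIES = ["http://proxy-a.example:8080", "http://proxy-b.example:8080"]
USER_AGENTS = ["ExampleBrowser/1.0", "ExampleBrowser/2.0", "ExampleBrowser/3.0"]


def request_settings(proxies, user_agents, delay_seconds):
    """Yield an endless stream of (proxy, headers, delay) tuples."""
    proxy_cycle = itertools.cycle(proxies)
    agent_cycle = itertools.cycle(user_agents)
    while True:
        yield next(proxy_cycle), {"User-Agent": next(agent_cycle)}, delay_seconds


def opener_for(proxy, headers):
    """Build an opener that routes traffic through `proxy` with these headers."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    opener = urllib.request.build_opener(handler)
    opener.addheaders = list(headers.items())
    return opener


settings = request_settings(PROXIES, USER_AGENTS, delay_seconds=1.5)
first_three = list(itertools.islice(settings, 3))
for proxy, headers, delay in first_three:
    print(proxy, headers["User-Agent"], delay)
```

In a crawl loop, each tuple would be used as `opener_for(proxy, headers).open(url)` followed by `time.sleep(delay)`, which keeps the request rate down while spreading requests across addresses.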

Handling Redirects and Captchas

Handling redirects and captchas can be a challenge in web scraping, but there are several techniques that can be used to tackle them.

Most modern web scraping libraries, like Requests and Scrapy, already handle redirects by following through them and returning the final page. However, it is important to check the status code of the final page to ensure that it is the page you were looking for.
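The check described above can be sketched as a small helper that compares the requested URL with the final one. With Requests, the final URL and status are available as `response.url` and `response.status_code`; with `urllib`, as `response.geturl()` and `response.status`. The helper name and the list of path markers are assumptions for illustration.

```python
from urllib.parse import urlsplit

# Assumed path fragments that suggest a redirect to a challenge or login page.
SUSPICIOUS_MARKERS = ("captcha", "challenge", "login")


def landed_where_expected(requested_url, final_url, status_code):
    """True when the final page is a 200 on the same host and not a challenge page."""
    requested = urlsplit(requested_url)
    final = urlsplit(final_url)
    # Note: a harmless redirect to another host (e.g. adding "www.")
    # would also fail this check; loosen it if the target site does that.
    same_host = requested.netloc == final.netloc
    hit_challenge = any(marker in final.path.lower() for marker in SUSPICIOUS_MARKERS)
    return status_code == 200 and same_host and not hit_challenge


print(landed_where_expected(
    "https://example.com/items", "https://example.com/items", 200))
print(landed_where_expected(
    "https://example.com/items", "https://example.com/captcha?next=/items", 200))
```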

Captchas are designed to prevent bots from accessing websites, and they can be a challenge to handle in web scraping. One way to handle simple text-based captchas is to use OCR (Optical Character Recognition). More complex captchas, such as image-based captchas, may require more advanced techniques, like using machine learning algorithms to solve them.

Handling Iframe Tags and Unstructured Responses

Iframe tags are used to embed content from another source within a webpage. To scrape content from an iframe, you need to first locate the iframe tag and then access its source URL. Once you have the source URL, you can scrape the content from the iframe just like any other webpage.
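The two steps above, locating the iframe and resolving its source URL, can be sketched with the standard library. The class name, page URL, and sample HTML are made up for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class IframeSourceParser(HTMLParser):
    """Collect absolute URLs of every <iframe src="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag != "iframe":
            return
        src = dict(attrs).get("src")
        if src:
            # Relative src values are resolved against the parent page URL.
            self.sources.append(urljoin(self.base_url, src))


page_url = "https://example.com/articles/page.html"
sample_html = """
<p>Intro text</p>
<iframe src="/embed/chart?id=7"></iframe>
<iframe src="https://widgets.example.org/map"></iframe>
"""

parser = IframeSourceParser(page_url)
parser.feed(sample_html)
print(parser.sources)
```

Each URL in `parser.sources` can then be fetched and parsed like any other page.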

Conclusion

Web scraping is a powerful tool for extracting data from websites. However, it can be challenging due to the structural complexities of modern web pages. Advanced techniques like using proxies can help overcome some of these challenges by masking your IP address, which also helps you avoid rate limiting and detection by websites.

Python provides a rich ecosystem of libraries and tools for web scraping. You can build robust and scalable web scraping systems by combining these with advanced techniques like proxies. However, it is important to use these techniques responsibly and ethically and to respect the target website’s terms of service and legal restrictions.

FAQs

Do I Need a Proxy for Web Scraping?

Using a residential proxy network for web scraping can help avoid detection. Moreover, it can also help access restricted content and prevent rate limiting and IP bans through rotating proxies.

How Do You Use a Proxy in Web Scraping?

To use a proxy in web scraping, configure the scraping tool or library to route its requests through the proxy server. You can choose between residential and datacenter proxies to access websites through a proxy IP.

Is a VPN or Proxy Better for Web Scraping?

A residential proxy is better for web scraping as it provides more control and flexibility compared to a VPN.

What Do Scraping Proxies Do?

Scraping proxies mask your IP address to avoid detection, avoid rate limiting, and distribute requests across multiple IP addresses.

Sign Up at Zenscrape and scrape different websites to enhance your development experience.